Navigate counseling ethics: key changes and best practices

The 2014 ACA Code of Ethics is still the governing standard in 2026, even as artificial intelligence reshapes client interactions, federal policy disrupts DEI training, and a draft 2026 code awaits final adoption. For many counselors, this gap between what exists on paper and what’s happening in practice creates real confusion. Knowing which rules apply right now, which changes are coming, and how to document your reasoning is no longer optional. This guide breaks down the most critical shifts in counseling ethics, from ACA code revisions to AI guidelines to CACREP accreditation changes, so you can practice with confidence.

Table of Contents

- Recent updates to the ACA Code of Ethics

- Impacts of DEI standard suspensions in counselor education

- AI in counseling: Ethical frameworks and risks

- Common pitfalls: AI ethical violations in practice

- Applying new ethical guidelines in your counseling practice

- Counseling ethics: Why clarity matters more than compliance

- Advance your ethical counseling with Mastering Conflict

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| ACA code updates | The 2014 ACA Code remains the standard, but a draft 2026 update is underway addressing new ethical dilemmas. |
| DEI standard suspension | Some DEI requirements for counselor training were suspended in 2025 due to legal uncertainties and policy changes. |
| AI ethics consensus | Leading organizations now require human oversight, informed consent, and focus on client safety when using AI. |
| Real-world AI violations | Empirical studies show AI can miss key ethical standards in counseling, demanding vigilant human oversight. |
| Practical strategies | Apply up-to-date guidelines, document your reasoning, and prioritize transparent communication with clients. |

Recent updates to the ACA Code of Ethics

The ACA Code of Ethics is the foundation most counselors build their practice on. Right now, the 2014 code remains in effect, with a draft 2026 version open for public comment until April 24, 2026. That draft is not yet binding. Until it is formally adopted, your ethical obligations are governed by the 2014 standards.

This matters more than it sounds. The 2026 draft was created specifically to address gaps the 2014 code never anticipated. Technology, social change, and legal challenges have all moved faster than any ethics committee could have predicted. The new draft takes on:

- Artificial intelligence and automated tools in client care

- Data privacy and digital record-keeping obligations

- DEI considerations and culturally responsive practice

- Crisis care protocols in remote and hybrid settings

- Informed consent for technology-assisted services

For practitioners right now, the practical question is: how do you use the 2026 draft without violating the 2014 code? The answer is that you can use the draft as a guide for your own reasoning and documentation, but you cannot cite it as your binding authority. Think of it as a preview of where the profession is heading.

“The counselor’s ethical obligation is not just to follow a code, but to reason through situations where codes are silent or in transition.”

Public comment periods like the current one are rare opportunities to influence the final document. If you work in clinical supervision or specialize in a population not well represented in the 2014 code, your input can shape the final version. Understanding what mental health counseling demands in 2026 means engaging with these processes, not just waiting for the result.

Impacts of DEI standard suspensions in counselor education

Beyond the broader code revisions, counselors must also keep pace with fast-evolving educational and accreditation requirements. CACREP suspended enforcement of specific DEI-related accreditation standards, effective September 15, 2025. The reason: federal guidance on unlawful discrimination and shifting political priorities led the organization to pause enforcement while it determines how to stay legally compliant.

This does not mean DEI training is banned. It means specific accreditation benchmarks tied to DEI outcomes are no longer being enforced during this period. Programs are navigating a real tension between federal compliance and their professional obligation to prepare culturally competent counselors.

| Area affected | What changed | What stays the same |
|---|---|---|
| Accreditation standards | Specific DEI benchmarks suspended | Core clinical training requirements |
| Curriculum content | Programs have more discretion | Ethical competency expectations |
| Faculty diversity requirements | Enforcement paused | Institutional policies still apply |
| Student recruitment | DEI-focused criteria under review | Merit-based admissions continue |

For working counselors, this creates a gap. New graduates from programs that reduced DEI content may enter the field less prepared to work within supervision models designed for diverse client needs. That shifts the burden to supervisors and employers, who must fill the training gap after graduation.

The suspension also raises questions about culturally centered healing and whether clients from marginalized communities will receive the same quality of care. Ethical counselors are not waiting for accreditation rules to tell them how to practice. They are maintaining their own continuing education, seeking culturally informed supervision, and staying current with community-specific research even when formal requirements are paused. Some organizations have also turned to affordable healthcare ethics frameworks to think through equity in service delivery.

Pro Tip: If your program or organization reduced DEI training due to the suspension, build your own professional development plan to close that gap. Your clients’ needs have not changed.

AI in counseling: Ethical frameworks and risks

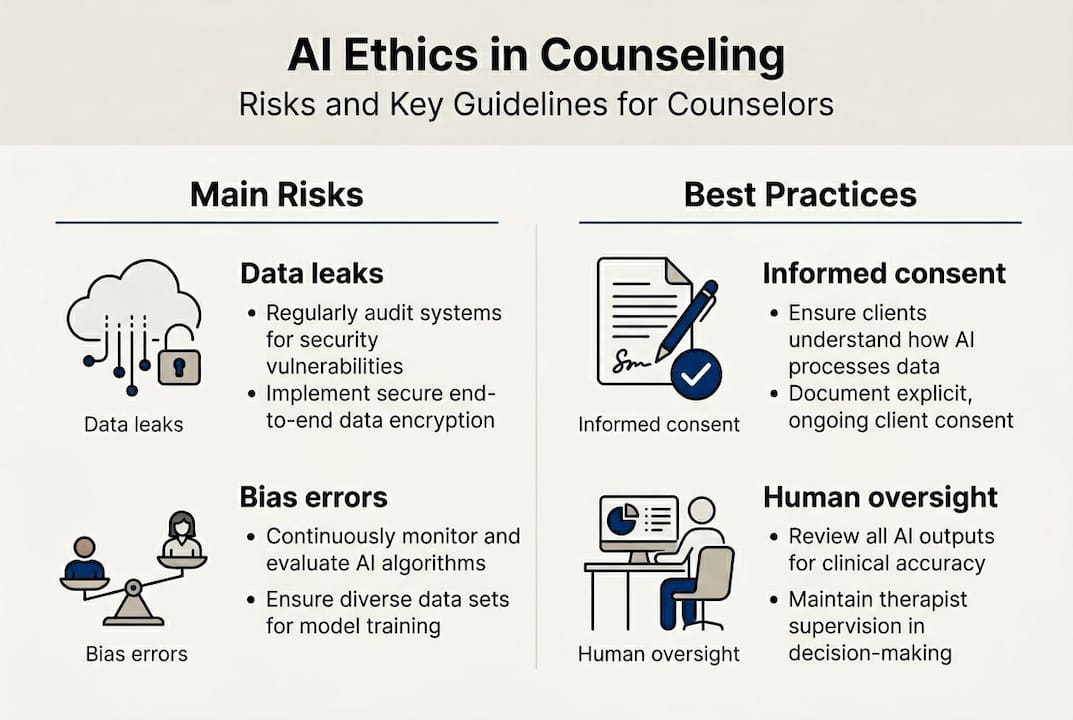

Alongside education, technology is rapidly reshaping what counselors must consider in their day-to-day practice. Multiple major organizations have now issued formal AI guidelines, and the consensus is clear: human primacy and informed consent are non-negotiable, regardless of which tool you use.

Here is how the major organizations compare:

| Organization | Key focus areas | Unique emphasis |
|---|---|---|
| NBCC | Human relationship primacy, competency | Counselor accountability for AI outputs |
| ACA | Informed consent, data privacy | Prohibition on AI for crisis or diagnosis |
| AMHCA | Client protection, bias prevention | Mental health-specific risk management |
| NAADAC | Addiction-context risks, consent | Vulnerability of substance use populations |
| AASCB | Data security, professional oversight | Board-level compliance expectations |

All five organizations agree on a few core points. AI cannot replace the therapeutic relationship. Clients must be informed when AI tools are used. Counselors remain accountable for any AI-generated content or recommendation. Data security is a shared obligation between the counselor and the platform they use.

The risks are not theoretical. Confidentiality in counseling becomes complicated when client data passes through third-party AI systems. Many popular tools do not meet HIPAA standards by default. Counselors who assume a tool is compliant without verifying are taking on significant liability.

For risk prevention, the most important step is documentation. Before using any AI tool with clients, document your rationale, the tool’s data policies, and how you obtained informed consent. Staying current on AI transparency and ethics is also part of responsible practice.
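One way to keep that documentation consistent is a simple structured record that flags anything still blank. The sketch below is illustrative only: the `AIToolUseRecord` class, its field names, and the `missing_items` helper are hypothetical and do not come from any code of ethics or vendor system. It covers only the three items named above (rationale, data policies, informed consent).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class AIToolUseRecord:
    """Hypothetical pre-use documentation for one AI tool (illustrative fields only)."""
    tool_name: str
    rationale: str = ""                   # why the tool is being used
    data_policy_summary: str = ""         # what the tool's data policies say
    consent_method: str = ""              # how informed consent was obtained
    consent_date: Optional[date] = None   # when informed consent was obtained


def missing_items(record: AIToolUseRecord) -> List[str]:
    """Return the documentation items that are still blank for this record."""
    missing = []
    if not record.rationale.strip():
        missing.append("rationale")
    if not record.data_policy_summary.strip():
        missing.append("data_policy_summary")
    # Consent counts as documented only when both the method and the date are recorded.
    if not record.consent_method.strip() or record.consent_date is None:
        missing.append("informed_consent")
    return missing


# Example: a record with only the rationale filled in.
draft = AIToolUseRecord(
    tool_name="note-drafting tool",
    rationale="Reduce time spent drafting session notes",
)
print(missing_items(draft))
```

A record like this does not replace clinical judgment or a real consent conversation. It only makes gaps in the paper trail visible before the tool is used with a client.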

Pro Tip: Always supervise AI use with robust human oversight. Review every AI-generated summary or recommendation before it influences your clinical decisions.

Common pitfalls: AI ethical violations in practice

Ethical frameworks are only as strong as their application, so let’s look at real-world risks and solutions. Research shows that AI systems violate mental health ethics in systematic ways, including lack of clinical context, deceptive empathy, bias in responses, and poor handling of crisis situations. In one practitioner-informed study, researchers identified 15 distinct ethical failures across 5 themes in just 137 AI counseling sessions.

That number should get your attention. Fifteen distinct kinds of ethical failure surfacing in only 137 sessions is not a rare edge case. It is a pattern.

The most common violations counselors encounter include:

- Lack of clinical context: AI tools do not know your client’s history, diagnosis, or prior sessions unless you input it, creating responses that miss critical nuance.

- Deceptive empathy: AI language can mimic warmth without genuine understanding, potentially misleading clients about the nature of the interaction.

- Bias in outputs: Training data reflects societal biases, which can produce culturally insensitive or clinically inappropriate responses.

- Crisis mishandling: AI tools frequently fail to recognize escalating risk or provide appropriate safety planning.

- Overconfidence in recommendations: AI may present suggestions with false certainty, undermining the counselor’s clinical judgment.

For trauma-informed counseling, these risks are amplified. Clients with trauma histories are especially vulnerable to misattunement, and an AI tool that misreads emotional cues can cause real harm. Any clinical setting that serves high-risk populations should treat AI as a background administrative tool only, never a front-line clinical resource.

Pro Tip: Never use AI for crisis response or unsupervised client interaction. If a client is in distress, human judgment is the only appropriate response.

Applying new ethical guidelines in your counseling practice

Now, let’s translate guidance into practical steps for your practice. The core challenge is knowing when to rely on the existing 2014 standards and when to begin incorporating 2026-forward thinking into your documentation and client communication.

Here is a working framework:

- Default to the 2014 code for all binding ethical decisions until the new code is formally adopted.

- Use the 2026 draft as a reasoning tool when you face situations the 2014 code does not address, especially around AI and technology.

- Document your ethical reasoning explicitly whenever you encounter a gray area. Write down what standard you applied and why.

- Obtain informed consent for any technology-assisted service, including telehealth platforms, AI note tools, or digital assessments.

- Conduct regular bias checks on any AI tool you use. Ask: does this tool perform equitably across the populations I serve?

- Stay current through continuing education, especially in AI ethics, cultural competency, and crisis care protocols.

The ACA recommendations for AI use are specific: obtain informed consent, avoid using AI for crisis or diagnostic purposes, stay aware of bias and DEI limitations, and maintain full counselor accountability for all outcomes. These apply now, under the 2014 code, because they fall within existing competency and client welfare standards.

For teletherapy best practices, the same logic applies. Technology changes the medium, not the obligation.

Pro Tip: Regularly review your tools and documentation for compliance. Set a calendar reminder every 90 days to audit your AI tool policies and update your consent forms.
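If you prefer to generate those review dates up front rather than rely on a recurring reminder, the arithmetic is simple. The following is a minimal sketch; the function name `audit_dates` and the start date are assumptions chosen for illustration, and the 90-day interval comes from the tip above.

```python
from datetime import date, timedelta
from typing import List


def audit_dates(start: date, count: int, interval_days: int = 90) -> List[date]:
    """Return the next `count` audit dates, spaced `interval_days` apart after `start`."""
    return [start + timedelta(days=interval_days * i) for i in range(1, count + 1)]


# Example: four audits following a (hypothetical) policy review on January 1, 2026.
for due in audit_dates(date(2026, 1, 1), count=4):
    print(due.isoformat())
```

Fixed 90-day spacing drifts relative to calendar quarters, so some practices may prefer to anchor audits to quarter starts instead.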

Counseling ethics: Why clarity matters more than compliance

Here is an uncomfortable truth: most ethical violations in counseling do not happen because a counselor chose to do wrong. They happen because the counselor was not clear, either with themselves or with their client, about what they were doing and why.

Compliance is a floor, not a ceiling. Checking a box that says you obtained informed consent is not the same as having a real conversation with a client about how their data is used or what an AI tool can and cannot do. The difference matters enormously when something goes wrong.

Rapidly shifting standards, from CACREP suspensions to draft code revisions, create a temptation to wait for clarity before acting. But waiting is itself an ethical choice. The counselors who navigate this period well are the ones who document their reasoning, communicate transparently with clients, and seek clinical supervision when they face genuine uncertainty.

The most important ethical tool you have is not a code. It is your ability to think clearly, explain your decisions, and stay accountable. That does not change regardless of which version of the ACA code is in effect.

Advance your ethical counseling with Mastering Conflict

Staying current with shifting ethical standards takes more than reading updates. It takes structured support, experienced guidance, and a community that takes professional accountability seriously.

At Mastering Conflict, Dr. Carlos Todd offers clinical supervision and clinical mentoring designed specifically for counselors navigating complex ethical terrain. Whether you are working through AI integration, managing documentation in a shifting regulatory environment, or building a culturally competent practice, these programs give you a framework grounded in evidence and real-world experience. If you are serious about practicing at the highest ethical standard in 2026 and beyond, these resources are built for that work.

Frequently asked questions

What is the current ACA Code of Ethics in 2026?

The 2014 ACA Code of Ethics remains in effect until the new draft is formally adopted, which is expected no earlier than late 2026. Counselors must use the 2014 standards as their binding authority.

How have DEI requirements changed in counselor education?

CACREP suspended enforcement of specific DEI-related accreditation standards in September 2025, creating flexibility for programs but also gaps in cultural competency training that supervisors and employers may need to address.

Can counselors ethically use AI tools with clients?

Yes, but only under strict conditions: informed consent, no crisis or diagnosis use, awareness of bias, and full counselor accountability for all AI-generated content or recommendations.

What are the main risks of using AI in counseling?

AI systems can violate ethics through lack of clinical context, deceptive empathy, cultural bias, and poor crisis handling, making human oversight essential in every AI-assisted interaction.
